SGDraw: Scene Graph Drawing Interface using Object-Oriented Representation

Published in the 25th International Conference on Human-Computer Interaction (HCI International 2023), Denmark, 2023

Abstract:

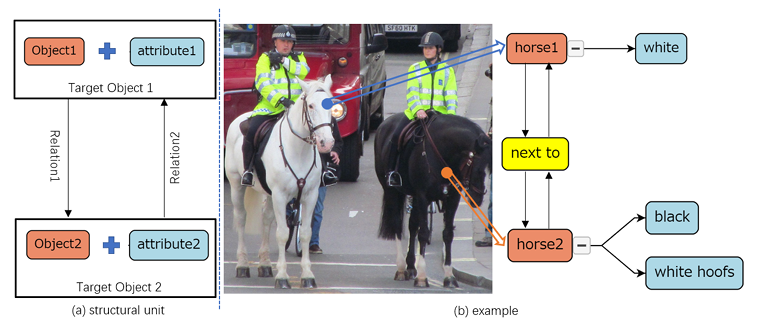

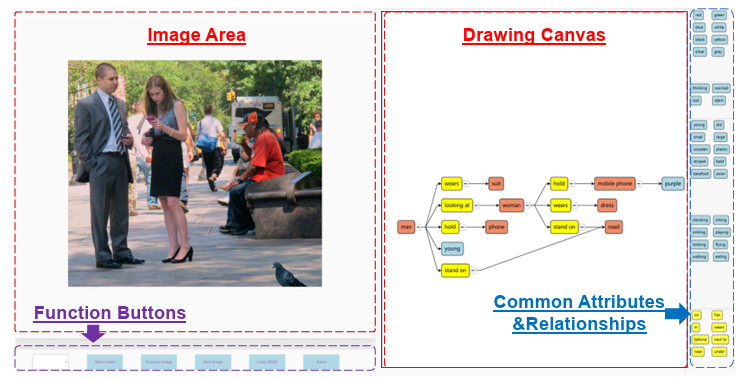

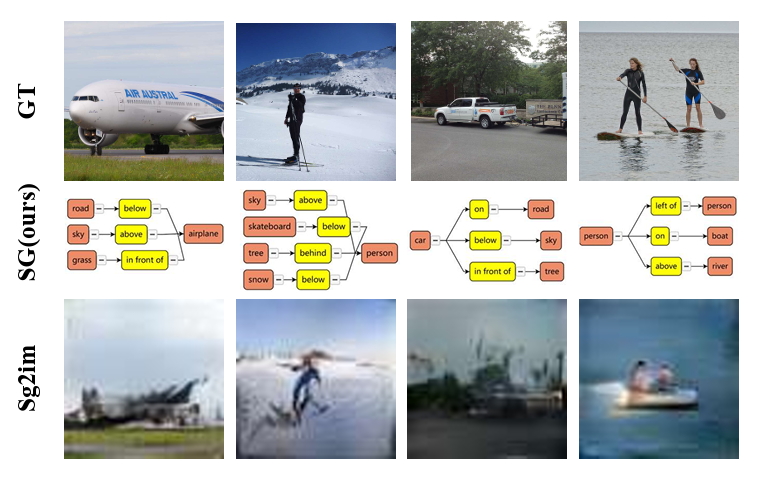

Scene understanding is an essential and challenging task in computer vision. As a visually-grounded graphical structure of an image, the scene graph has received increased attention because it offers explicit grounding of visual concepts. Previous works commonly obtain scene graphs from ground-truth annotations or generate them from the target images. However, drawing a proper scene graph for image retrieval, image generation, and multi-modal applications is difficult. Conventional scene graph annotation interfaces are not easy to use, and their results are hard to revise. Automatic scene graph generation methods based on deep neural networks focus only on objects and relationships while disregarding attributes. In this work, we propose SGDraw, a scene graph drawing interface that uses an object-oriented representation to help users interactively draw and edit scene graphs. SGDraw provides a web-based scene graph annotation and creation tool for scene understanding applications. To verify the effectiveness of the proposed interface, we conducted a comparison study with a conventional tool and a user experience study. The results show that SGDraw can help create scene graphs with richer details and describe images more accurately than traditional bounding box annotations. We believe the proposed SGDraw can be useful in various vision tasks, such as image generation and retrieval. The project source code is available at https://github.com/zty0304/SGDraw.

Framework:

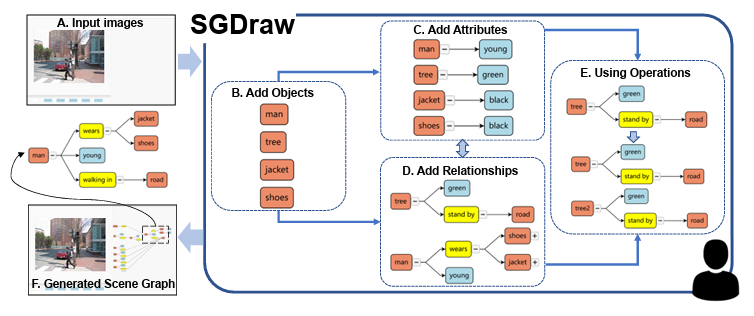

Workflow of the proposed interface. (A) Given a task image as input to SGDraw, the user starts by (B) adding objects and can then (C) add attributes or (D) add relationships. After that, the user can choose to (E) apply auxiliary operations (such as cloning, as shown in the figure) to change the structure of the graph. These steps are repeated until (F) the desired scene graph is obtained.
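To make the workflow concrete, the following is a minimal sketch of an object-oriented scene graph in which each object owns its attributes and outgoing relationships. It is not taken from the SGDraw source code: the class names `SceneObject` and `SceneGraph`, the method names, and the choice that cloning copies attributes and relationships are all assumptions made for illustration.

```python
from __future__ import annotations

import copy
from dataclasses import dataclass, field


@dataclass
class SceneObject:
    """Hypothetical node type: an object that owns its attributes and relationships."""
    name: str
    attributes: list[str] = field(default_factory=list)
    # Each relationship is stored as (predicate, name of the target object).
    relationships: list[tuple[str, str]] = field(default_factory=list)


class SceneGraph:
    """Hypothetical container mirroring steps (B) to (E) of the workflow."""

    def __init__(self) -> None:
        self.objects: dict[str, SceneObject] = {}

    def add_object(self, name: str) -> SceneObject:
        # Step (B): add an object node.
        if name in self.objects:
            raise ValueError(f"object {name!r} already exists")
        self.objects[name] = SceneObject(name)
        return self.objects[name]

    def add_attribute(self, name: str, attribute: str) -> None:
        # Step (C): attach an attribute to an existing object.
        self.objects[name].attributes.append(attribute)

    def add_relationship(self, subject: str, predicate: str, target: str) -> None:
        # Step (D): link two existing objects with a predicate.
        if target not in self.objects:
            raise KeyError(target)
        self.objects[subject].relationships.append((predicate, target))

    def clone_object(self, name: str, new_name: str) -> SceneObject:
        # Step (E): assumed cloning semantics, copying attributes and relationships.
        if new_name in self.objects:
            raise ValueError(f"object {new_name!r} already exists")
        clone = copy.deepcopy(self.objects[name])
        clone.name = new_name
        self.objects[new_name] = clone
        return clone

    def triples(self) -> list[tuple[str, str, str]]:
        # Flatten the graph into (subject, predicate, object) triples.
        return [
            (obj.name, predicate, target)
            for obj in self.objects.values()
            for predicate, target in obj.relationships
        ]


graph = SceneGraph()
graph.add_object("man")
graph.add_object("horse")
graph.add_attribute("horse", "brown")
graph.add_relationship("man", "riding", "horse")
graph.clone_object("man", "man_2")
print(graph.triples())
```

Storing attributes and relationships on the object itself means that an auxiliary operation such as cloning can duplicate a whole subgraph fragment in one step, which is one plausible reading of why an object-oriented representation makes editing easier.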

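Recommended citation: Tianyu Zhang, **Xusheng Du**, Chia-Ming Chang, Xi Yang, Haoran Xie. "SGDraw: Scene Graph Drawing Interface using Object-Oriented Representation." 25th International Conference on Human-Computer Interaction (HCI International 2023), Denmark, 2023.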